State Snapshot Transfer (SST) at a glance

PXC uses a protocol called State Snapshot Transfer to provision a node joining an existing cluster with all the data it needs to synchronize. This is analogous to cloning a slave in asynchronous replication: you take a full backup of one node and copy it to the new one, while tracking the replication position of the backup.

PXC automates this process using scriptable SST methods. The most common of these is the xtrabackup-v2 method, which is the default in PXC 5.6. Xtrabackup is generally favored over other SST methods because it is non-blocking on the Donor node (the node contributing the backup).

The basic flow of this method is:

- The Joiner:
  - Joins the cluster
  - Learns it needs a full SST and clobbers its local datadir (the SST will replace it)
  - Prepares for a state transfer by opening a socat listener on port 4444 (by default)
  - The socat pipes the incoming files into the datadir/.sst directory

- The Donor:
  - Is picked by the cluster (this can be configured or be based on WAN segments)
  - Starts a streaming Xtrabackup and pipes its output via socat to the Joiner on port 4444
  - Upon finishing its backup, signals this to the Joiner and sends it the final Galera GTID of the backup

- The Joiner:
  - Records all changes from the GTID of the Donor’s backup forward in its gcache (and overflow pages; this is limited by available disk space)
  - Runs the --apply-log phase of Xtrabackup on the Donor’s backup
  - Moves the datadir/.sst directory contents into the datadir
  - Starts mysqld
  - Applies all the transactions it needs (Joining and Joined states, just like IST does it)
  - Moves to the ‘Synced’ state and is done.
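The catch-up part of this flow can be illustrated with a toy model. This is only a sketch and not how Galera is implemented: the class `JoinerSketch` and its method names are hypothetical, and plain integers stand in for Galera seqnos. It shows the idea that write-sets arriving during the transfer are buffered, and that anything already contained in the backup is skipped once the backup is in place.

```python
from collections import deque


class JoinerSketch:
    """Toy model of a Joiner buffering write-sets during an SST (hypothetical)."""

    def __init__(self):
        self.gcache = deque()      # write-sets received while the SST is running
        self.applied_seqno = None  # position of the restored data, unknown until restore

    def receive(self, seqno, writeset):
        # Replication keeps flowing during the transfer, so buffer everything.
        self.gcache.append((seqno, writeset))

    def restore_backup(self, backup_seqno):
        # The Donor reports the position of its backup when it finishes.
        self.applied_seqno = backup_seqno

    def catch_up(self):
        # Apply buffered write-sets in order, skipping those already in the backup.
        applied = []
        while self.gcache:
            seqno, writeset = self.gcache.popleft()
            if seqno <= self.applied_seqno:
                continue
            applied.append(writeset)
            self.applied_seqno = seqno
        return applied


joiner = JoinerSketch()
for seqno in range(101, 106):
    joiner.receive(seqno, f"trx-{seqno}")
joiner.restore_backup(102)  # the backup already contains everything up to 102
caught_up = joiner.catch_up()
print(caught_up)
```

In this model the longer the backup and restore take, the more write-sets pile up in the buffer, which is why the apply phase matters for total SST time.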

There are a lot of moving pieces here, and nothing is really tuned by default. On larger clusters, SST can be quite scary because it may take hours or even days. Any failure can mean starting over from the beginning.

This blog will concentrate on some ways to make a good dent in the time SST can take. Many of these methods are trade-offs and may not apply to your situation. Further, there may be other ways to speed things up that I haven’t thought of. Please share what you’ve found that works!

The Environment

I am testing SST on a PXC 5.6.24 cluster in AWS. The nodes are c3.4xlarge and the datadirs are RAID-0 over the two ephemeral SSD drives in that instance type. These instances are all in the same region.

My simulated application is using only node1 in the cluster and is sysbench OLTP with 200 tables with 1M rows each. This comes out to just under 50G of data. The test application runs on a separate server with 32 threads.

The PXC cluster itself is tuned to best practices for InnoDB and Galera performance.

Baseline

In my first test the cluster is a single member (receiving workload) and I am joining node2. This configuration is untuned for SST. I measured the time from when mysqld started on node2 until it entered the Synced state (i.e., fully caught up). In the log, it looked like this:

150724 15:59:24 mysqld_safe Starting mysqld daemon with databases from /var/lib/mysql

... lots of other output ...

2015-07-24 16:48:39 31084 [Note] WSREP: Shifting JOINED -> SYNCED (TO: 4647341)

Doing some math on the above, we find that the SST took 51 minutes to complete.
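This kind of measurement is easy to script. The sketch below parses the two log lines excerpted above and subtracts their timestamps; the helper names are hypothetical, and the timestamp layouts are taken from those two lines only. For the two excerpted lines it prints 49m 15s, slightly less than the figure quoted above, which was presumably taken from the full log.

```python
from datetime import datetime

start_line = "150724 15:59:24 mysqld_safe Starting mysqld daemon with databases from /var/lib/mysql"
synced_line = "2015-07-24 16:48:39 31084 [Note] WSREP: Shifting JOINED -> SYNCED (TO: 4647341)"


def parse_mysqld_safe(line):
    # mysqld_safe lines in this log start with "YYMMDD HH:MM:SS" (15 characters).
    return datetime.strptime(line[:15], "%y%m%d %H:%M:%S")


def parse_mysqld(line):
    # mysqld lines in this log start with "YYYY-MM-DD HH:MM:SS" (19 characters).
    return datetime.strptime(line[:19], "%Y-%m-%d %H:%M:%S")


elapsed = parse_mysqld(synced_line) - parse_mysqld_safe(start_line)
minutes, seconds = divmod(int(elapsed.total_seconds()), 60)
print(f"{minutes}m {seconds}s between the two log lines")
```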

--use-memory

One of the first things I noticed was that the --apply-log step on the Joiner was very slow. Anyone who uses Xtrabackup a lot will know that --apply-log runs a lot faster if you give it some extra RAM to use while making the backup consistent, via the --use-memory option. We can set this in our my.cnf like this:

[sst]

inno-apply-opts="--use-memory=20G"

The [sst] section is a special one understood only by the xtrabackup-v2 script. inno-apply-opts allows me to specify arguments to innobackupex when it runs.

Note that this change only applies to the Joiner (i.e., you don’t have to put it on all your nodes and restart them to take advantage of it).

This change immediately makes a huge improvement to our above scenario (node2 joining node1 under load) and the SST now takes just over 30 minutes.

wsrep_slave_threads

Another slow part of getting to Synced is how long it takes to apply transactions up to realtime after the backup is restored and in place on the Joiner. We can improve this throughput by increasing the number of apply threads on the Joiner to make better use of the CPU. Prior to this, wsrep_slave_threads was set to 1. If I increase it to 32 (there are 16 cores on this instance type), my SST now takes 25m 32s.

Compression

xtrabackup-v2 supports adding a compression process into the datastream. On the Donor it compresses and on the Joiner it decompresses. This allows you to trade CPU for transfer speed. If your bottleneck turns out to be network transport and you have spare CPU, this can help a lot.

Further, I can use pigz instead of gzip to get parallel compression, but theoretically any compression utility can work as long as it can compress and decompress standard input to standard output. I install the ‘pigz’ package on all my nodes and change my my.cnf like this:

[sst]

inno-apply-opts="--use-memory=20G"

compressor="pigz"

decompressor="pigz -d"

Both the Joiner and the Donor must have the respective decompressor and compressor settings or the SST will fail with a vague error message (not actually having pigz installed will do the same thing).
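The only contract between the two sides is that the compressor and decompressor are matching stream filters. A minimal sketch of that idea, using Python's zlib in gzip mode as a stand-in for pigz (which writes gzip-compatible output); the function names and the payload are made up for illustration:

```python
import zlib


def compress_stream(chunks):
    # Donor side: behaves like a compressor reading stdin and writing stdout.
    comp = zlib.compressobj(-1, zlib.DEFLATED, 31)  # wbits=31 selects the gzip container
    for chunk in chunks:
        out = comp.compress(chunk)
        if out:
            yield out
    yield comp.flush()


def decompress_stream(chunks):
    # Joiner side: behaves like the matching decompressor on the incoming stream.
    decomp = zlib.decompressobj(31)
    for chunk in chunks:
        out = decomp.decompress(chunk)
        if out:
            yield out
    tail = decomp.flush()
    if tail:
        yield tail


backup = [b"row data " * 1000 for _ in range(50)]  # made-up, highly compressible payload
wire = list(compress_stream(backup))
restored = b"".join(decompress_stream(wire))

original = sum(len(c) for c in backup)
sent = sum(len(c) for c in wire)
print(f"{original} bytes became {sent} bytes on the wire")
```

If the Joiner used a decompressor that did not match the Donor's compressor, the second step would fail on the first chunk, which mirrors why both settings have to agree.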

By adding compression, my SST is down to 21 minutes, but there’s a catch. My application performance starts to take a serious nose-dive during this test. Pigz is consuming most of the CPU on my Donor, which is also my primary application node. This may or may not hurt your application workload in the same way, but it emphasizes the importance of understanding (and measuring) the performance impact SST has on your Donor nodes.

Dedicated donor

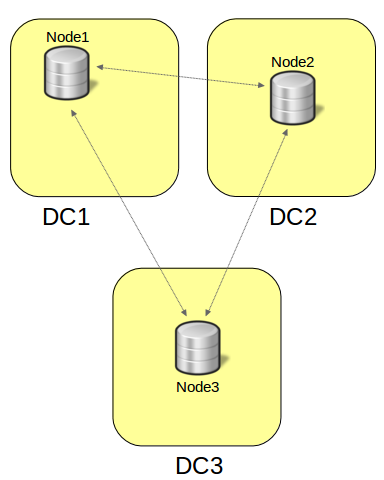

To alleviate the problem with the application, I now leave node2 up and spin up node3. Since I’m expecting node2 to normally not be receiving application traffic directly, I can configure node3 to prefer node2 as its donor like this:

[mysqld]

...

wsrep_sst_donor = node2,

When node3 starts, this setting instructs the cluster that node2 is the preferred donor, but if that’s not available, pick something else (that’s what the trailing comma means).
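The meaning of the trailing comma can be sketched as a small parser. This is an illustration of the semantics as I understand them, not Galera's actual code, and `parse_sst_donor` is a hypothetical helper: names before the comma are preferred donors, and a trailing comma allows falling back to any other node.

```python
def parse_sst_donor(value):
    """Split a wsrep_sst_donor string into (preferred names, fallback allowed)."""
    value = value.strip()
    if not value:
        # Nothing configured: assume the cluster is free to pick any donor.
        return [], True
    names = [name.strip() for name in value.split(",")]
    # A trailing comma leaves an empty last element after splitting.
    allow_fallback = names[-1] == ""
    preferred = [name for name in names if name]
    return preferred, allow_fallback


print(parse_sst_donor("node2,"))
print(parse_sst_donor("node2"))
```

With `node2,` the sketch returns node2 as preferred with fallback allowed; with plain `node2` there is no fallback, so the join would depend on node2 being available.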

Donor nodes are permitted to fall behind in replication apply as needed without sending flow control. Application traffic sent to such a node may see more stale data as well as more certification failures for writes (not to mention the performance issues we saw above with node1). Since node2 is not getting application traffic, moving into the Donor state and doing an expensive SST with pigz compression should be relatively safe for the rest of the cluster (in this case, node1).

Even if you don’t have a dedicated donor, if you use a load balancer of some kind in front of your cluster, you may elect to consider Donor nodes as failing their health checks so application traffic is diverted during any state transfer.
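A health check along these lines usually keys off the wsrep_local_state status variable, where 4 means Synced and 2 means Donor/Desynced. The sketch below shows only the decision logic; the function name and the `allow_donor` switch are hypothetical, and fetching the value from MySQL (for example with SHOW STATUS LIKE 'wsrep_local_state') is left out.

```python
WSREP_STATES = {1: "Joining", 2: "Donor/Desynced", 3: "Joined", 4: "Synced"}


def passes_health_check(wsrep_local_state, allow_donor=False):
    """Return True if the load balancer should keep sending traffic to this node."""
    if wsrep_local_state == 4:
        return True
    if wsrep_local_state == 2:
        # Donors are pulled out of rotation unless explicitly allowed.
        return allow_donor
    return False


for state, name in WSREP_STATES.items():
    print(f"{name:<15} healthy={passes_health_check(state)}")
```

Keeping `allow_donor` as a switch is a design choice for small clusters, where taking the Donor out of rotation might leave too few nodes serving traffic.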

When I brought up node3, with node2 as the donor, the SST time dropped to 18m 33s.
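To put the results side by side, the timings reported above can be compared against the baseline with a few lines of arithmetic. The figures are the ones from this post; the second entry is approximate because that run was only described as "just over 30 minutes", and the last run joined node3 rather than node2, so treat the comparison as indicative only.

```python
results = [
    ("baseline (untuned)", 51 * 60),
    ("--use-memory=20G", 30 * 60),  # reported as "just over 30 minutes", so approximate
    ("wsrep_slave_threads=32", 25 * 60 + 32),
    ("pigz compression", 21 * 60),
    ("dedicated donor", 18 * 60 + 33),
]

baseline = results[0][1]
for label, seconds in results:
    saved = 100 * (baseline - seconds) / baseline
    print(f"{label:<24} {seconds // 60:>2}m {seconds % 60:02d}s  {saved:5.1f}% less time than baseline")

final_saving = round(100 * (baseline - results[-1][1]) / baseline, 1)
```

By this measure the final configuration takes roughly 64% less time than the untuned baseline.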

Conclusion

Each of these tunings helped the SST speed, though the later adjustments maybe had less of a direct impact. Depending on your workload, database size, network and CPU available, your mileage may of course vary. Your tunings should vary accordingly, but also realize you may actually want to limit (and not increase) the speed of state transfers in some cases to avoid other problems. For example, I’ve seen several clusters get unstable during SST and the only explanation for this is the amount of network bandwidth consumed by the state transfer preventing the actual Galera communication between the nodes. Be sure to consider the overall state of production when tuning your SSTs.

The post Optimizing PXC Xtrabackup State Snapshot Transfer appeared first on MySQL Performance Blog.

Percona is glad to announce the release of Percona XtraDB Cluster 5.7.25-31.35 on February 28, 2018. Binaries are available from the downloads section or from our software repositories.
